The College of Engineering HPC cluster is a heterogeneous mix of about 130 servers providing over 5500 CPU cores, over 250 GPUs, and over 50 TB of total RAM. The systems are connected via Gigabit Ethernet and InfiniBand; most of the newer servers use Mellanox EDR or HDR InfiniBand. The cluster also has access to 500 TB of fast global scratch storage from DDN. The CoE HPC cluster is rated at nearly 2000 TFLOPS peak (double precision).
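A peak TFLOPS rating is a theoretical number. As a rough sketch of how such a figure is built up for a CPU node, the double-precision peak is sockets × cores per socket × clock (GHz) × double-precision FLOPs per cycle. The 32 FLOPs/cycle used below is an assumption for cores with two AVX-512 FMA units (8 doubles per vector × 2 operations per FMA × 2 units). Sustained AVX-512 clocks are usually below the base clock, and GPU peaks, which come from vendor specifications, are not modeled. The function name `cpu_peak_gflops` is hypothetical.

```python
def cpu_peak_gflops(sockets: int, cores_per_socket: int, ghz: float,
                    flops_per_cycle: int) -> float:
    """Theoretical double-precision peak in GFLOPS:
    sockets * cores per socket * clock (GHz) * DP FLOPs per cycle."""
    return sockets * cores_per_socket * ghz * flops_per_cycle


# Assumption: 8 doubles per AVX-512 vector x 2 (FMA) x 2 FMA units per core.
AVX512_TWO_FMA = 32

# 2x 16-core 2.10 GHz Xeon Gold 6130, as in the submit hosts and cn-e[31-34].
peak = cpu_peak_gflops(2, 16, 2.10, AVX512_TWO_FMA)
print(f"{peak:.1f} GFLOPS")  # 2150.4 GFLOPS, i.e. about 2.15 TFLOPS per node
```

Summing this per node group (and adding the GPUs' vendor-rated FP64 peaks) is how a cluster-wide figure is typically estimated; measured throughput, for example from HPL, is always lower.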

Summary of the HPC cluster:

Submit Hosts (submit-a, b, c)

| Model | Dell R740 |
| --- | --- |
| Processor | 2x 16-core 2.10 GHz Intel Xeon Gold 6130 with 22528 KB cache |
| Memory | 256 GB RAM |
| Scratch storage | n/a |
| NIC | 4x Broadcom NetXtreme-E dual 10-Gigabit Ethernet Adapter, Mellanox EDR InfiniBand |

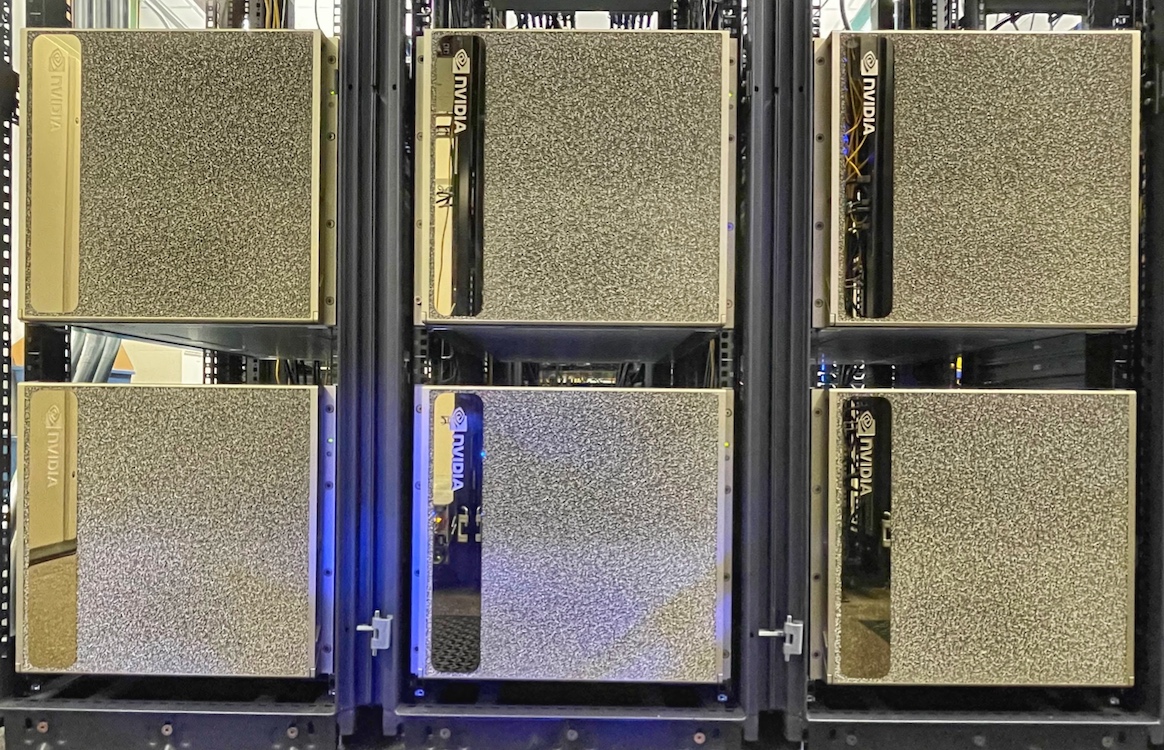

4 Nvidia DGX H100/H200 nodes (dgxh-[1-4])

| Model | Nvidia DGX H100/H200 |
| --- | --- |
| Processor | 2x 56-core 2.0 GHz Intel Xeon Platinum 8480CL CPU with 105 MB cache |
| GPUs | 8 Nvidia H100 w/ 80GB VRAM (dgxh-[1-3]) or 8 Nvidia H200 w/ 140GB VRAM (dgxh-4) |
| Memory | 2.0 TB RAM @4800 MT/s |
| Scratch storage | 24 TB NVMe |
| NIC | Mellanox 100G Ethernet, Mellanox HDR InfiniBand |

5 Nvidia DGX2 nodes (dgx2-[1-5])

| Model | Nvidia DGX2 |
| --- | --- |
| Processor | 2x 24-core 2.70 GHz Intel Xeon Platinum 8168 CPU with 33792 KB cache |
| GPUs | 16 Nvidia Tesla V100 w/ 32GB VRAM |
| Memory | 1.5 TB RAM @2666 MT/s |
| Scratch storage | 28 TB NVMe |
| NIC | Mellanox 100G Ethernet, Mellanox EDR InfiniBand |

2 Intel Sapphire Rapids nodes:

cn-w-[1-2]

| Model | 2x Dell PowerEdge R760xa |
| --- | --- |
| Processor | 20-core 2.20 GHz Intel Xeon Platinum 8462Y+ with 2x120 MB L3 cache |
| GPUs | 2 Nvidia H100 w/ 80GB VRAM (cn-w-1) or 4 Nvidia L40S w/ 48GB VRAM (cn-w-2) |
| Memory | 512 GB RAM @4800 MT/s |
| Scratch storage | 3.5 TB NVMe |
| NIC | 2x Intel X550T 10-Gigabit Ethernet Adapter, ConnectX-6 InfiniBand |

26 AMD EPYC compute nodes:

cn-x-1

| Model | Dell XE7745 |
| --- | --- |
| Processor | 2x 128-core 2.70 GHz AMD EPYC 9755 with 512 MB L3 cache |
| GPUs | 8 Nvidia H200 w/ 140GB VRAM |
| Memory | 512 GB RAM @4800 MT/s |
| Scratch storage | 3.5 TB NVMe |
| NIC | ConnectX-6 Dx 100-Gigabit Ethernet Adapter, ConnectX-7 NDR InfiniBand |

cn-gpu[10-12]

| Model | 3x Exxact GPU servers |
| --- | --- |
| Processor | 2x 64-core 2.45 GHz AMD EPYC 7763 w/ 512 MB L3 cache |
| GPUs | 8x Nvidia L40S w/ 48GB VRAM |
| Memory | 1024 GB RAM @3200 MT/s |
| Scratch storage | 894 GB |
| NIC | Broadcom BCM57416 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-v-[1-9]

| Model | 8x Dell PowerEdge R6625 |
| --- | --- |
| Processor | 2x 48-core 3.6 GHz AMD EPYC 9474F w/ 512 MB L3 cache |
| GPUs | n/a |
| Memory | 384 GB RAM @4800 MT/s |
| Scratch storage | 400 GB |
| NIC | Broadcom BCM57416 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-u-[1-2]

| Model | 2x Dell PowerEdge R6525 |
| --- | --- |
| Processor | 2x 32-core 2.8 GHz AMD EPYC 7543 w/ 32768 KB cache |
| GPUs | n/a |
| Memory | 512 GB RAM @3200 MT/s |
| Scratch storage | 400 GB |
| NIC | Broadcom BCM57416 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-t-1

| Model | 1x Dell PowerEdge R7525 |
| --- | --- |
| Processor | 2x 24-core 3.2 GHz AMD EPYC 7313 w/ 32768 KB cache |
| GPUs | 3x Nvidia A40 w/ 48 GB VRAM |
| Memory | 256 GB RAM @3200 MT/s |
| Scratch storage | 844 GB |
| NIC | Broadcom BCM5720 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-s-[1-5]

| Model | 5x Dell PowerEdge R7525 |
| --- | --- |
| Processor | 2x 24-core 3.2 GHz AMD EPYC 74F3 w/ 32768 KB cache |
| GPUs | 2x Nvidia A40 w/ 48 GB VRAM |
| Memory | 256 GB RAM @3200 MT/s |
| Scratch storage | 844 GB |
| NIC | Broadcom BCM57416 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-r-[5-6]

| Model | 2x Dell PowerEdge R7525 |
| --- | --- |
| Processor | 2x 32-core 2.6 GHz AMD EPYC 7513 w/ 32768 KB cache |
| GPUs | 2x Nvidia A40 w/ 48 GB VRAM |
| Memory | 256 GB RAM @3200 MT/s |
| Scratch storage | 725 GB |
| NIC | Broadcom BCM5720 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-r-[1-4]

| Model | 4x Dell PowerEdge R7525 |
| --- | --- |
| Processor | 2x 24-core 3.2 GHz AMD EPYC 7F72 w/ 16384 KB cache |
| GPUs | 2x Nvidia A40 w/ 48 GB VRAM |
| Memory | 256 GB RAM @3200 MT/s |
| Scratch storage | 725 GB |
| NIC | Broadcom BCM5720 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

44 Intel Skylake/Cascade Lake compute nodes:

sail-gpu0

| Model | Dell PowerEdge DSS8440 |
| --- | --- |
| Processor | 2x 24-core 3.0 GHz Intel Xeon Gold 6248R w/ 36608 KB cache |
| GPUs | 8x Nvidia A40 w/ 48 GB VRAM |
| Memory | 768 GB RAM @2933 MT/s |
| Scratch storage | 7.0 TB SSD |
| NIC | Intel I350 Gigabit Ethernet Adapter, Mellanox ConnectX-5 InfiniBand |

soundwave

| Model | Dell PowerEdge R740xd2 |
| --- | --- |
| Processor | 2x 10-core 2.40 GHz Intel Xeon Silver 4210R w/ 14080 KB cache |
| Memory | 384 GB RAM @2666 MT/s |
| Scratch storage | 5.3 TB SSD |
| NIC | Broadcom NetXtreme BCM5720, Mellanox ConnectX-5 InfiniBand |

optimus

| Model | Dell PowerEdge DSS8440 |
| --- | --- |
| Processor | 2x 20-core 2.10 GHz Intel Xeon Gold 6230 w/ 28160 KB cache |
| GPUs | 8x Nvidia Quadro RTX 6000 w/ 22GB VRAM |
| Memory | 768 GB RAM @2933 MT/s |
| Scratch storage | 345 GB SSD |
| NIC | Intel X710 10-Gigabit Ethernet Adapter, Mellanox ConnectX-5 InfiniBand |

cn-gpu[6-7]

| Model | Dell PowerEdge DSS8440 |
| --- | --- |
| Processor | 2x 24-core 3.0 GHz Intel Xeon Gold 6248R w/ 36608 KB cache |
| GPUs | 8x Nvidia Quadro RTX 8000 w/ 44 GB VRAM |
| Memory | 768 GB RAM @2933 MT/s |
| Scratch storage | 7.0 TB SSD |
| NIC | Intel X710 10-Gigabit Ethernet Adapter, Mellanox ConnectX-5 InfiniBand |

cn-gpu5

| Model | Dell PowerEdge DSS8440 |
| --- | --- |
| Processor | 2x 20-core 2.50 GHz Intel Xeon Gold 6248 w/ 28160 KB cache |
| GPUs | 8x Nvidia Quadro RTX 8000 w/ 44 GB VRAM |
| Memory | 768 GB RAM @2933 MT/s |
| Scratch storage | 7.0 TB SSD |
| NIC | Intel X710 10-Gigabit Ethernet Adapter, Mellanox ConnectX-5 InfiniBand |

cn-q-[1-2]

| Model | 2x Dell PowerEdge R640 |
| --- | --- |
| Processor | 2x 24-core 3.0 GHz Intel Xeon Gold 6248R w/ 36608 KB cache |
| Memory | 192 GB RAM @2933 MT/s |
| Scratch storage | 345 GB |
| NIC | Broadcom BCM5720 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX-6 InfiniBand |

cn-p-1

| Model | Dell PowerEdge R740xd |
| --- | --- |
| Processor | 2x 24-core 3.0 GHz Intel Xeon Gold 6254R w/ 36608 KB cache |
| GPUs | 1x Nvidia Tesla V100S w/ 32 GB VRAM |
| Memory | 768 GB RAM @2933 MT/s |
| Scratch storage | 345 GB |
| NIC | Broadcom BCM5720 NetXtreme-E Ethernet Adapter, Mellanox ConnectX-6 InfiniBand |

cn-o-1

| Model | Dell PowerEdge R740 |
| --- | --- |
| Processor | 2x 24-core 3.0 GHz Intel Xeon Gold 6254R w/ 36608 KB cache |
| Memory | 192 GB RAM @2933 MT/s |
| Scratch storage | 345 GB |
| NIC | Broadcom BCM5720 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX-6 InfiniBand |

cn-n-[1-6]

| Model | 6x Dell PowerEdge R640 |
| --- | --- |
| Processor | 2x 18-core 3.10 GHz Intel Xeon Gold 6254 w/ 25344 KB cache |
| Memory | 192 GB RAM @2933 MT/s |
| Scratch storage | 345 GB |
| NIC | Broadcom BCM57416 NetXtreme-E, Mellanox ConnectX-5 InfiniBand |

cn-m-1

| Model | Dell PowerEdge R740xd |
| --- | --- |
| Processor | 2x 4-core 3.80 GHz Intel Xeon Gold 5222 w/ 16896 KB cache |
| GPUs | 6x Nvidia Tesla T4 w/ 15 GB VRAM |
| Memory | 192 GB RAM @2933 MT/s |
| Scratch storage | 155 GB SSD |
| NIC | Broadcom NetXtreme BCM5720 Ethernet Adapter, Mellanox ConnectX-3 InfiniBand |

cn-m-2

| Model | Dell Precision 7920 |
| --- | --- |
| Processor | 2x 8-core 2.50 GHz Intel Xeon Silver 4215 w/ 11264 KB cache |
| GPUs | 2x Nvidia Quadro RTX 6000 w/ 24 GB VRAM |
| Memory | 192 GB RAM @2666 MT/s |
| Scratch storage | 165 GB SSD |
| NIC | Intel I350 Gigabit Ethernet Adapter, Mellanox ConnectX-5 InfiniBand |

cn-e[41, 43, 44]

| Model | 3x Dell PowerEdge R740 |
| --- | --- |
| Processor | 2x 22-core 2.10 GHz Intel Xeon Gold 6152 w/ 30976 KB cache |
| Memory | 128-768 GB RAM @2666 MT/s |
| Scratch storage | 346 GB |
| NIC | Mellanox ConnectX-4 Ethernet, Mellanox ConnectX InfiniBand |

cn-e[31-34]

| Model | 4x Dell PowerEdge R740 |
| --- | --- |
| Processor | 2x 16-core 2.10 GHz Intel Xeon Gold 6130 w/ 22528 KB cache |
| Memory | 512 GB RAM @2666 MT/s |
| Scratch storage | 346 GB |
| NIC | Broadcom BCM57416 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

cn-e[21-24]

| Model | 4x Dell PowerEdge R640 |
| --- | --- |
| Processor | 2x 14-core 2.20 GHz Intel Xeon Gold 5120 w/ 19712 KB cache |
| Memory | 256 GB RAM @2666 MT/s |
| Scratch storage | 259 GB |
| NIC | Broadcom BCM57416 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX InfiniBand |

cn-e[14-15]

| Model | 2x Dell PowerEdge R740 |
| --- | --- |
| Processor | 2x 12-core 2.60 GHz Intel Xeon Gold 6126 w/ 19712 KB cache |
| Memory | 384 GB RAM @2666 MT/s |
| Scratch storage | 259 GB |
| NIC | Broadcom BCM57416 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

cn-e[11-13]

| Model | 3x Dell PowerEdge R740 |
| --- | --- |
| Processor | 2x 12-core 2.10 GHz Intel Xeon Silver 4116 w/ 16896 KB cache |
| Memory | 256 GB RAM @2666 MT/s |
| Scratch storage | 282 GB |
| NIC | Intel X550T 10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

cn-e[01-10]

| Model | 10x Dell PowerEdge R740 |
| --- | --- |
| Processor | 2x 10-core 2.20 GHz Intel Xeon Silver 4114 w/ 16896 KB cache |
| Memory | 128 GB RAM @2666 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom BCM57416 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

23 Dell Intel Haswell/Broadwell compute nodes:

cn-d31

| Model | Dell PowerEdge R930 |
| --- | --- |
| Processor | 2x 18-core 2.10 GHz Intel Xeon E5-2695v4 w/ 46080 KB cache |
| Memory | 768 GB RAM @2400 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom BCM57800 NetXtreme II 1/10-Gigabit Ethernet, Mellanox ConnectX-4 InfiniBand |

cn-d21

| Model | Dell PowerEdge R930 |
| --- | --- |
| Processor | 2x 8-core 3.20 GHz Intel Xeon E5-2667v4 w/ 25600 KB cache |
| Memory | 512 GB RAM @2400 MT/s |
| Scratch storage | 279 GB |
| NIC | Broadcom BCM57800 NetXtreme II 1/10-Gigabit Ethernet, Mellanox ConnectX-4 InfiniBand |

cn-d[11-13]

| Model | 3x Dell PowerEdge R930 |
| --- | --- |
| Processor | 2x 20-core 2.10 GHz Intel Xeon E7-8870v4 w/ 51200 KB cache |
| Memory | 512-1536 GB RAM @2400 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom BCM57800 NetXtreme II 1/10-Gigabit Ethernet, Mellanox ConnectX-4 InfiniBand |

cn-d01

| Model | Dell PowerEdge R930 |
| --- | --- |
| Processor | 2x 14-core 2.00 GHz Intel Xeon E7-4830v4 w/ 35840 KB cache |
| Memory | 384 GB RAM @2400 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom BCM57800 NetXtreme II 1/10-Gigabit Ethernet, Mellanox ConnectX-4 InfiniBand |

cn-c[31-33]

| Model | 3x Dell PowerEdge R630 |
| --- | --- |
| Processor | 2x 12-core 1.80 GHz Intel Xeon E5-2650Lv3 w/ 30720 KB cache |
| Memory | 512 GB RAM @2133 MT/s |
| Scratch storage | 931 GB |
| NIC | Intel 82599ES 10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

cn-c30

| Model | Dell PowerEdge R730 |
| --- | --- |
| Processor | 2x 12-core 2.30 GHz Intel Xeon E5-2670v3 w/ 30720 KB cache |
| GPUs | 2x Nvidia Tesla M60 w/ 8GB VRAM |
| Memory | 256 GB RAM @2133 MT/s |
| Scratch storage | 3.8 TB |
| NIC | Broadcom BCM57416 NetXtreme-E 10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

cn-c[20-24]

| Model | 5x Dell PowerEdge R730 |
| --- | --- |
| Processor | 2x 10-core 2.60 GHz Intel Xeon E5-2660v3 w/ 25600 KB cache |
| Memory | 128 GB RAM @2133 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom BCM57800 NetXtreme II 1/10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

cn-c[10-17]

| Model | 8x Dell PowerEdge R730 |
| --- | --- |
| Processor | 2x 10-core 2.60 GHz Intel Xeon E5-2660v3 w/ 25600 KB cache |
| Memory | 64-128 GB RAM @2133 MT/s |
| Scratch storage | 193 GB |
| NIC | Broadcom BCM57800 NetXtreme II 1/10-Gigabit Ethernet, Mellanox ConnectX-3 InfiniBand |

24 Dell Intel Sandy Bridge/Ivy Bridge compute nodes:

cn-b[01-12]

| Model | 12x Dell PowerEdge C6320 |
| --- | --- |
| Processor | 2x 8-core 2.60 GHz Intel Xeon E5-2650v2 w/ 20480 KB cache |
| Memory | 64 GB RAM @1866 MT/s |
| Scratch storage | 338 GB |
| NIC | Intel I350 Gigabit Ethernet, Mellanox ConnectX InfiniBand |

cn-a[20-26]

| Model | 7x Dell PowerEdge R620 |
| --- | --- |
| Processor | 2x 8-core 2.90 GHz Intel Xeon E5-2690 w/ 20480 KB cache |
| Memory | 256 GB RAM @1600 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom NetXtreme BCM5720 Gigabit Ethernet, Mellanox ConnectX InfiniBand |

cn-a[11-15]

| Model | 5x Dell PowerEdge R620 |
| --- | --- |
| Processor | 2x 8-core 2.60 GHz Intel Xeon E5-2670 w/ 20480 KB cache |
| Memory | 128 GB RAM @1600 MT/s |
| Scratch storage | 203 GB |
| NIC | Broadcom NetXtreme BCM5720 Gigabit Ethernet, Mellanox ConnectX InfiniBand |
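Node groups in this listing are written as bracketed host ranges such as `dgxh-[1-4]`, `cn-e[01-10]`, or `cn-e[41, 43, 44]`, in the style Slurm uses for host lists. On the cluster itself, `scontrol show hostnames 'cn-e[01-10]'` expands such an expression. The sketch below is a simplified, hypothetical re-implementation (`expand_hostlist` is not part of any library) that handles a single bracket group of numbers and ranges and keeps zero padding from the width of the range start.

```python
import re


def expand_hostlist(expr: str) -> list:
    """Expand one bracketed host range, e.g. 'cn-e[01-10]' -> cn-e01 ... cn-e10.

    Simplified sketch: supports a single [...] group containing comma-separated
    numbers and lo-hi ranges; padding width is taken from the 'lo' value.
    """
    m = re.fullmatch(r"([^\[\]]*)\[([^\]]+)\]([^\[\]]*)", expr.strip())
    if m is None:
        return [expr.strip()]  # plain hostname such as 'cn-x-1'
    prefix, body, suffix = m.groups()
    hosts = []
    for part in body.split(","):
        part = part.strip()
        if "-" in part:
            lo, hi = part.split("-", 1)
            width = len(lo)  # '01' -> width 2, so 1 becomes '01'
            for n in range(int(lo), int(hi) + 1):
                hosts.append(f"{prefix}{n:0{width}d}{suffix}")
        else:
            hosts.append(f"{prefix}{part}{suffix}")
    return hosts


print(expand_hostlist("cn-e[41, 43, 44]"))  # ['cn-e41', 'cn-e43', 'cn-e44']
print(len(expand_hostlist("cn-c[10-17]")))  # 8
```

Counting the expanded names per group is a quick way to cross-check a group's node count against its "Nx" model entry.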

The cluster runs Rocky Linux 8 and 9, which are Red Hat Enterprise Linux-compatible (about 60% of nodes run EL8 and 40% run EL9).
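Because nodes run two different EL major releases, software built on one release may not run on the other. A job can check which release it landed on by reading the standard `/etc/os-release` file, whose `VERSION_ID` field holds the release (for example `"9.4"`). This is a minimal sketch; the helper names are hypothetical, and the sample text is an illustrative excerpt rather than a copy of any specific node's file.

```python
from pathlib import Path


def el_major_version(os_release_text: str) -> int:
    """Return the major release from os-release text, e.g. VERSION_ID="9.4" -> 9."""
    fields = {}
    for line in os_release_text.splitlines():
        if "=" in line and not line.startswith("#"):
            key, value = line.split("=", 1)
            fields[key.strip()] = value.strip().strip('"')
    return int(fields["VERSION_ID"].split(".")[0])


def current_el_version() -> int:
    """Read the running node's /etc/os-release (Linux only)."""
    return el_major_version(Path("/etc/os-release").read_text())


# Illustrative excerpt in os-release format.
SAMPLE = 'NAME="Rocky Linux"\nID="rocky"\nVERSION_ID="9.4"\n'
print(el_major_version(SAMPLE))  # 9
```

In a job script, calling `current_el_version()` before loading a binary makes a mismatch between build and run environments fail early with a clear message.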

The batch queuing system is the Slurm Workload Manager.
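Slurm jobs are usually described by a batch script whose `#SBATCH` lines request resources. The sketch below generates such a script from Python. The `#SBATCH` options used (`--job-name`, `--nodes`, `--ntasks`, `--cpus-per-task`, `--mem`, `--time`, `--gres`, `--nodelist`) are standard Slurm options, but this cluster's partition, account, and QoS names are not listed here, so they are omitted. The GRES name `gpu`, the default values, `train.py`, and the helper name are assumptions for illustration.

```python
from typing import Optional


def make_sbatch_script(job_name: str, command: str, *, cpus: int = 1,
                       mem: str = "8G", time_limit: str = "01:00:00",
                       gpus: int = 0, nodelist: Optional[str] = None) -> str:
    """Return the text of a single-node Slurm batch script (illustrative defaults)."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        "#SBATCH --nodes=1",
        "#SBATCH --ntasks=1",
        f"#SBATCH --cpus-per-task={cpus}",
        f"#SBATCH --mem={mem}",
        f"#SBATCH --time={time_limit}",
    ]
    if gpus > 0:
        # Generic GPU request; the GRES name 'gpu' is an assumption about site config.
        lines.append(f"#SBATCH --gres=gpu:{gpus}")
    if nodelist:
        # Optional: pin the job to named nodes, e.g. 'dgxh-1' from the listing above.
        lines.append(f"#SBATCH --nodelist={nodelist}")
    lines.append(command)
    return "\n".join(lines) + "\n"


# Hypothetical job: 2 GPUs and 16 cores on one DGX H100 node.
script = make_sbatch_script("train", "python train.py", cpus=16, mem="64G",
                            gpus=2, nodelist="dgxh-1")
print(script)
```

Save the output as, say, `job.sh` and submit it with `sbatch job.sh`; `squeue -u $USER` then shows its state. Leaving out `--nodelist` lets Slurm pick any node that satisfies the request, which usually shortens queue time.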

Popular software includes:

MATLAB (2023a, 2023b, 2024a, 2024b, 2025a, 2025b)

Mathematica (12.3, 13.3, 14.3)

Ansys (2019r3, 2020r2, 2021r2, 2022r2, 2023r2, 2024r2, 2025r2)

AnsysEDT (2023r2, 2024r2)

STAR-CCM+ (15.06, 16.06, 17.04, 18.06, 19.06)

Gurobi (8.1, 9.1, 9.5, 10.0, 11.0, 12.0)

Intel oneAPI (2022, 2024, 2025)

NVHPC (24.x, 25.x)

GCC 5.x - 15.2

LLVM 14 - 20

CUDA 11.x - 13.x

The OSU College of Engineering maintains a shared High Performance Computing Cluster (HPCC) for research use. The cluster currently consists of a heterogeneous mix of about 130 compute nodes providing over 5500 CPU cores, over 250 GPUs, and over 52 TB of RAM. Highlights include the recent addition of 4 Nvidia DGX H100/H200 systems, each with 112 cores, 8 Hopper H100 or H200 GPUs, 2.0 TB RAM, and 25 TB of local scratch space, and 5 Nvidia DGX-2 systems, each with 48 cores, 16 Tesla V100 GPUs, 1.5 TB RAM, and 27 TB of local scratch space. The cluster also has 38 additional GPU servers with H200, L40S, A40, Tesla V100, Tesla T4, Quadro RTX 6000, and Quadro RTX 8000 GPUs. All servers are connected both to the primary engineering network and to a second, private high-speed network that improves the performance of parallel jobs. Most of the recent additions to the cluster are equipped with HDR or EDR InfiniBand connections. About 500 TB of high-speed shared disk space, served from a DDN AI400X2 EXAScaler system, is available for cluster jobs. All computing resources are housed in a temperature-controlled server room protected by a UPS for short-term power fluctuations and a diesel generator for long-term power outages.